Some time ago, we published a guide to designing a better survey structure and avoiding common survey mistakes. In this post, I want to address the fact that, no matter how hard you try, your survey will almost always contain bias in one way or another. However, there are some things you can focus on to minimise survey bias. But first, let’s look at the bias pitfalls. In the following, we consider only surveys in the physical space, such as a survey station or exit interviews/surveys.

How do you present your survey?

If you are running a kiosk-based survey, for example on a tablet fixed in a case or a kiosk stand placed somewhere convenient, then the technology you have chosen creates a certain bias on its own, often related to age or gender.

In the same way, if people complete your survey via exit interviews, it’s pretty much the same deal. In any survey where you approach the respondent to learn about their experience or get their feedback, there’s bias. And it’s twofold. First, the interviewer will consciously or subconsciously select her respondents based on certain criteria (other than the ones defined in pre-segmentation). This could be personal appearance, personality type or something completely different. Second, the respondent’s answer also depends on the interviewer. For example, if the interviewer is a young extrovert with a lot of energy, responses may differ from those given to an interviewer who is less engaging.

So, whether you run your on-site surveys via interviews or survey stations, there’s bias!

Where do you place your survey?

An entirely different issue is where people complete your survey. Assume that you are bringing your car in for service. Do you think your answers would differ if you took the survey in the waiting area, drinking coffee, rather than on your way out of the dealership? Chances are that you would make more of an effort to give an accurate and descriptive answer if you were sitting in the waiting area, right? I mean, if you’re heading out the door, you’re not going to invest as much time in answering, because mentally you have already left the establishment.

Most organisations are interested in the whole service experience, so they want to ask their customers once the experience is complete. But there’s always a trade-off between how much data you get and how full each response is.

Survey design is important in order to minimise survey bias

The survey design itself is important: how you ask your questions, what conditional logic is built into them, and so on. In other words, is the respondent even able to answer the question properly?

Firstly, simply putting the survey questions in an order that is logical for the respondent is important. For instance, if the survey is about a service experience, you need to establish that the respondent has actually had an experience of some kind: have they browsed, purchased, or been in contact with service staff? The key is always to ensure that the respondent is actually able to answer a given question. You can solve this problem easily by filtering the respondents so that they only see relevant questions. In tabsurvey, we ensure this by introducing a simple flow in the survey.
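
To make the idea of filtering concrete, here is a minimal sketch of conditional question flow in Python. It is not how tabsurvey implements flows; the `Question` class, the `show_if` condition and the example questions are all made up for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional

Answers = Dict[str, str]


@dataclass
class Question:
    key: str
    text: str
    # Hypothetical condition: the question is shown only if this returns
    # True for the answers collected so far. None means "always show".
    show_if: Optional[Callable[[Answers], bool]] = None


def visible_questions(questions: List[Question], answers: Answers) -> List[Question]:
    """Return only the questions this respondent is able to answer."""
    return [q for q in questions if q.show_if is None or q.show_if(answers)]


# Illustrative survey: the staff question is skipped unless the
# respondent says they were in contact with staff.
SURVEY = [
    Question("contact", "Did you speak with a member of staff today?"),
    Question(
        "staff_rating",
        "How would you rate the service you received from staff?",
        show_if=lambda a: a.get("contact") == "yes",
    ),
    Question("overall", "How was your visit overall?"),
]
```

A respondent who answers "no" to the first question never sees the staff rating question, so nobody is asked to rate an interaction they did not have.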

Question phrasing

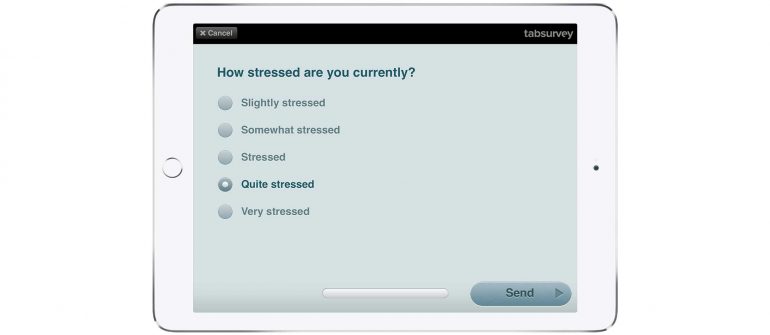

Another typical pitfall in a survey is the question phrasing. Phrasing a question with bias is unfortunately quite common. Consider an employee survey that examines employee satisfaction and maybe the level of stress in the organisation. Now consider the question: “On a scale from 1 to 5, how stressed do you currently feel?”. Well, the employee who doesn’t feel stressed at all has no way of answering this question accurately. Even a score of 1 will indicate that she feels a little stressed out. One way around this is to give her an explicit way to say so, for example by labelling the lowest score “not stressed at all”. So, the lesson is to be attentive to the phrasing of the question, because a biased question produces biased answers.

The right predefined choices

Along the same lines, creating a question with predefined choices (single or multiple choice questions) can create bias. It could be as simple as “Where did you hear about us?” followed by a list of choices. But if the respondent’s preferred choice is not among them, she has to pick an answer that doesn’t fit, and that introduces bias. The solution is to always have an “Other” field, where the respondent can fill in a text-based answer. It may seem trivial, but the “Other” choice is nonetheless often left out of the list, and as a result the respondent is unable to express her true opinion.
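
As a rough illustration, the “Other” rule can be enforced when the question is built rather than left to memory. The sketch below is plain Python with made-up helper names (`with_other`, `record_answer`); it is an assumption about how one might do it, not part of any particular survey tool.

```python
from typing import List, Optional

OTHER = "Other"


def with_other(choices: List[str]) -> List[str]:
    """Return the choices with an 'Other' option appended if it is missing."""
    return choices if OTHER in choices else choices + [OTHER]


def record_answer(choice: str, choices: List[str],
                  other_text: Optional[str] = None) -> dict:
    """Validate one answer; 'Other' must come with a free-text description."""
    if choice not in choices:
        raise ValueError(f"Unknown choice: {choice!r}")
    if choice == OTHER:
        text = (other_text or "").strip()
        if not text:
            raise ValueError("Please describe your answer when choosing 'Other'")
        return {"choice": OTHER, "other_text": text}
    return {"choice": choice, "other_text": None}


# Illustrative choices for "Where did you hear about us?"
options = with_other(["Friend", "Social media", "Search engine"])
```

Requiring text alongside “Other” keeps the option useful: the free-text answers show which choices are missing from the list.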

So, there it is. Use these simple guidelines when you conduct your surveys and you will minimise survey bias as far as possible.